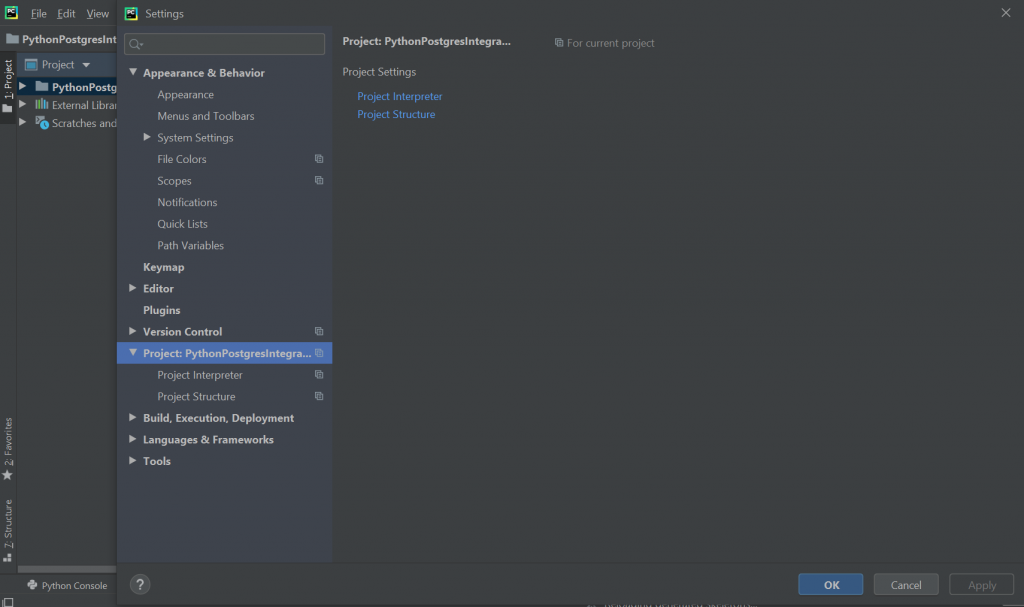

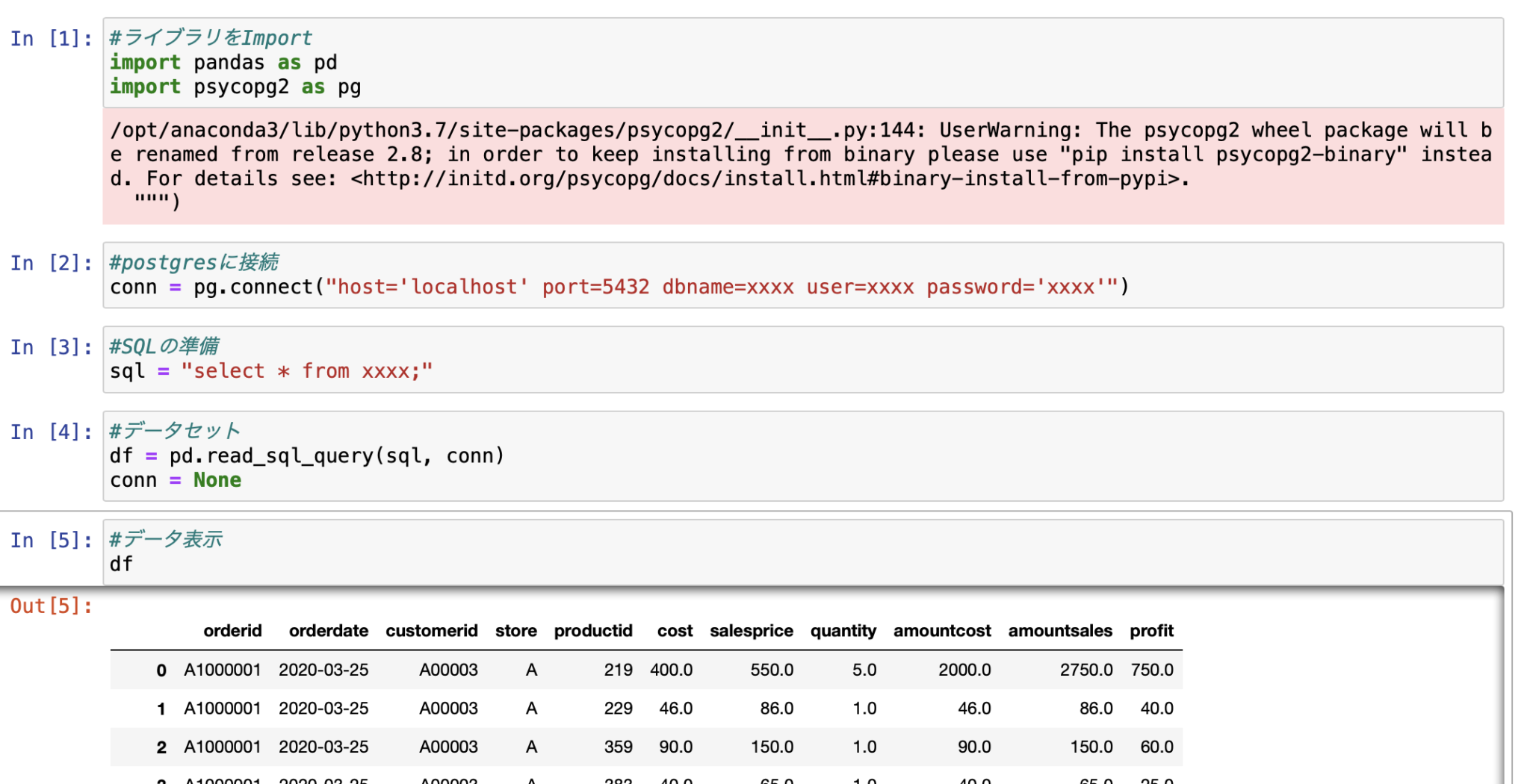

If you have ever tried to insert a relatively large dataframe into a PostgreSQL table, you know that single inserts are to be avoided at all costs because of how long they take to execute.

There are multiple ways to do bulk inserts with Psycopg2 (see this Stack Overflow page and this blog post for instance). It becomes confusing to identify which one is the most efficient. In this post, I compared the following 7 bulk insert methods, and ran the benchmarks for you:

- `copy_from()`: there are two ways to do this (view post)

For a fully functioning tutorial on how to replicate this, please refer to my Jupyter notebook on GitHub.

Step 1: Specify the connection parameters

`# Here you want to change your database, username & password according to your own values`
`}`

Step 2: Load the pandas dataframe, and connect to the database

The data for this tutorial is freely available online, but you will also find it in the data/ directory of my GitHub repository. What is nice about this dataframe is that it contains string, date and float columns, so it should be a good test dataframe for benchmarking bulk inserts.

`import pandas as pd`
`csv_file = "./data/global-temp-monthly.csv"`

`""" Connect to the PostgreSQL database server """`
`print('Connecting to the PostgreSQL database.')`
`except (Exception, psycopg2.DatabaseError) as error:`

Step 3: The seven different ways to do a Bulk Insert using Psycopg2

Using cursor.executemany() to insert the dataframe

`# Create a list of tuples from the dataframe values`
`query = "INSERT INTO %s(%s) VALUES(%%s,%%s,%%s)" % (table, cols)`
`def execute_batch(conn, df, table, page_size=100):`
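To make the alternatives to single inserts more concrete, here is a minimal sketch of three of the approaches named in this post: `cursor.executemany()`, `psycopg2.extras.execute_batch()` and `cursor.copy_from()`. It is not the code from the original notebook. The helper names (`build_insert_query`, `_run`, `copy_from_buffer`), the table name and the column names are made up for illustration, and the functions take a plain list of row tuples rather than a dataframe so that they can be tried without pandas. The `copy_from()` variant shown here streams from an in-memory buffer, which is only an assumption about one of the "two ways" mentioned above.

```python
import io


def build_insert_query(table, columns):
    """Return an INSERT statement with one %s placeholder per column.

    Table and column names are interpolated straight into the SQL text,
    so they must come from trusted code, never from user input.
    """
    cols = ",".join(columns)
    placeholders = ",".join(["%s"] * len(columns))
    return "INSERT INTO %s(%s) VALUES(%s)" % (table, cols, placeholders)


def _run(conn, action):
    """Run action(cursor); commit on success, roll back and re-raise on error."""
    cursor = conn.cursor()
    try:
        action(cursor)
        conn.commit()
    except Exception:
        conn.rollback()
        raise
    finally:
        cursor.close()


def execute_many(conn, rows, table, columns):
    """Insert all rows with cursor.executemany()."""
    query = build_insert_query(table, columns)
    _run(conn, lambda cur: cur.executemany(query, rows))


def execute_batch(conn, rows, table, columns, page_size=100):
    """Insert rows with psycopg2.extras.execute_batch(), page_size rows per round trip."""
    import psycopg2.extras  # imported lazily so the other helpers work without psycopg2

    query = build_insert_query(table, columns)
    _run(conn, lambda cur: psycopg2.extras.execute_batch(
        cur, query, rows, page_size=page_size))


def copy_from_buffer(conn, rows, table, columns, sep="\t"):
    """Stream rows through an in-memory text buffer into cursor.copy_from().

    Assumes no value contains the separator or a newline; None is written
    as \\N, which is copy_from()'s default NULL marker.
    """
    buffer = io.StringIO()
    for row in rows:
        buffer.write(sep.join("\\N" if v is None else str(v) for v in row) + "\n")
    buffer.seek(0)
    _run(conn, lambda cur: cur.copy_from(buffer, table, sep=sep, columns=columns))
```

With a pandas dataframe `df`, the inputs could be prepared as `rows = list(df.itertuples(index=False, name=None))` and `columns = list(df.columns)`, then passed to any of the three functions along with a connection returned by `psycopg2.connect(...)`.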
